Illuminating Level Creation For Free-to-play Puzzle Games

Levels drive the core interaction in most free-to-play puzzle games. The quality of this content and the pacing of level features, rewards, and difficulty have an enormous impact on both retention and monetization. The overall visual presentation, simplicity in design, story, setting, a deep and resonant meta game, and the quality of retention features all have their impact, but for most players the levels are the key driver of whether they stay and play or go away.

When creating and releasing a regular stream of high-performing free-to-play puzzle levels, four best practices are crucial:

- Building a comprehensive level creation cycle (Covered in Part 1)

- Meeting all technical requirements for a good starting situation (Covered in Part 2)

- Setting reliable level design goals (Covered in Part 2)

- Being aware of what makes a good level (Part 3, coming soon)

This first article details the first practice listed above. The rest will be covered in subsequent posts.

Part 1

A Strong Level Creation Cycle in 6 Phases

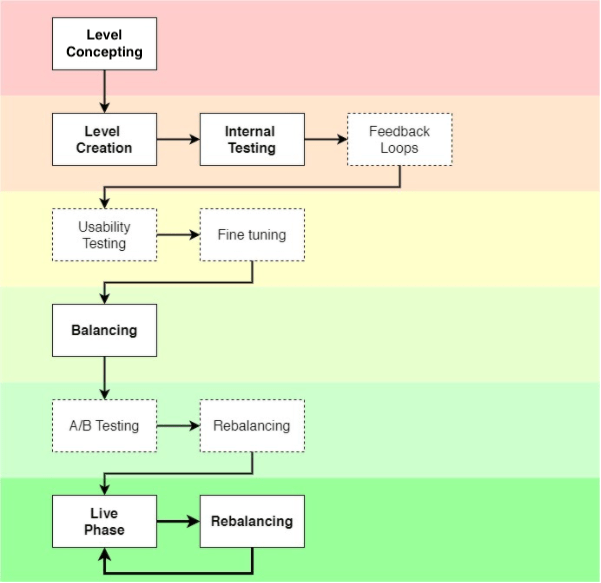

This figure shows a comprehensive breakdown of steps to achieve a properly balanced, high-performing game level. Bold components are must-haves. Dashed components are really helpful to judge and fine-tune a level’s quality based on both internal and external feedback.

Phase 1: Level Concepting

The specific way you come up with your level concepts and how you prepare these for the actual level creation in a level editor (in-engine) can vary widely based on your team’s preferences. Whatever your process, the better you prepare the whole level concept, the faster you will realize your idea, without losing time on open design questions or on experimenting with individual level elements and placements while the concept is still unclear.

I am somewhat traditional in that I prepare scribbles in a notebook including the whole level layout, obstacle placements, info on main and side goals, available moves and other variables so that the whole vision is clear, can quickly be built in the editor and is playable as early as possible. Sometimes, if you start from scratch with an empty game-board in the level editor, valuable work time is lost trying out different layouts, mechanics and obstacle combinations without knowing if everything fits together. Having a clear concept in mind (and on paper) speeds up this process substantially.

Phase 2: Level Creation, Internal Testing And Feedback Loops

Level creation is an effort-intensive and challenging process that varies from game to game. Each game will have different objectives for level sets, and different conditions, components, and levers impacting difficulty, pacing, or completion time. What is common is that, no matter what level concepts you create, you will need talented and experienced level designers to sit down and do the level creation work: taking those concepts and using them as guides to make playable content in-game. It’s reasonably common for a level-based free-to-play game to have hundreds, or even thousands, of levels, and each of those levels typically requires several hours of building, testing, and iteration to make it good enough to consider for your final product. This means hundreds, or even thousands, of man-hours go into level creation for a typical level-based game.

Imagining the task in this light, you can see how well-developed, easy-to-use level design tools and experienced level designers with a strong ability to quickly create and refine this content can pay substantial dividends in the quality-for-cost equation of this part of the game design and production process.

After the technical process of level creation, a useful way to prove and judge a level’s quality and difficulty is to follow up with internal testing and feedback loops. This, of course, requires teammates or additional level designers to play your levels. Ask them to give feedback on your design and balancing so you are able to improve the whole user experience. Collect the feedback in simple sheets for the levels, with columns for different people’s feedback, comments and testing results. These internal feedback loops are low-hanging fruit that, if acted upon, will improve a level’s performance, identify potential issues and better prepare it for actual user tests and live distribution.

Click HERE to view an example feedback spreadsheet

Phase 2B: Usability Testing And Fine Tuning

One of the most valuable activities you can do is to perform usability tests with real players from your defined target audience. Preferably this would happen before you begin to balance difficulty, allowing you to improve a level’s feel by adapting layout and mechanics based on actual player input. Feedback from your teammates is very useful, but it never replaces real player input. Your audience reacts very differently in terms of behavior, play time, steps taken, and perceived excitement and complexity than the gaming professionals who make up the bulk of your teammates. Most importantly, players don’t know the game by heart and aren’t as skilled as a designer who has been working on it for quite some time.

It is always exciting and rewarding to see how players react to, interact with, and finally judge your creations. This gives you a fresh view on your design and lets you fine-tune your level for your actual audience.

If you don’t have the chance to do these tests on-site, there are online tools and platforms, such as PlaytestCloud, that remotely recruit players and deliver recorded playtest sessions for review.

Phase 3: Balancing

Balancing your levels to conform to the desired overall difficulty curve and preparing them for live consumption takes the most time, but this can be mitigated with good balancing tools and workflows. Where possible, we recommend using AI/Machine Learning to auto-play levels and understand win/loss metrics before you have a large established player-base. It will never replace testing a level on your own or having actual players test your level, but it is definitely a way to get trustworthy data on your level difficulty (e.g. win rate, objective completion rate). Where it is not possible to use an AI tool, the more traditional way to do this is to team up with your available teammates and collect their data on play-throughs, and then balance based on those results before taking your levels live and analyzing early player data (for example, from soft launch player behavioral data).
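To make the auto-play idea concrete, here is a minimal Python sketch. It is not a real game AI: `ToyLevel`, `play_once` and `estimate` are hypothetical names, and the level is reduced to a coin flip per move (each move collects one goal item with probability `hit_chance`). In practice the bot would drive your actual game logic. The sketch only shows how many automated attempts turn into the metrics mentioned above, such as win rate and objective completion rate.

```python
import random
from dataclasses import dataclass


@dataclass
class ToyLevel:
    """Hypothetical stand-in for a real level: collect `goal` items within `moves`."""
    moves: int
    goal: int
    hit_chance: float  # assumed chance that a single move collects one goal item


def play_once(level, rng):
    """Simulate one attempt; return (won, moves_left, objective_completion)."""
    collected = 0
    for used in range(1, level.moves + 1):
        if rng.random() < level.hit_chance:
            collected += 1
        if collected >= level.goal:
            return True, level.moves - used, 1.0
    return False, 0, collected / level.goal


def estimate(level, attempts=10_000, seed=1):
    """Run many simulated attempts and summarize them as level KPIs."""
    rng = random.Random(seed)  # fixed seed so repeated runs are comparable
    results = [play_once(level, rng) for _ in range(attempts)]
    wins = [r for r in results if r[0]]
    return {
        "win_rate": len(wins) / attempts,
        "avg_moves_left_on_win": sum(r[1] for r in wins) / len(wins) if wins else 0.0,
        "avg_objective_completion": sum(r[2] for r in results) / attempts,
    }


if __name__ == "__main__":
    print(estimate(ToyLevel(moves=20, goal=10, hit_chance=0.5)))
```

Lowering `moves` or raising `goal` and re-running shows how a single lever shifts the estimated win rate before any real player has seen the level.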

An important side-note: Do not attempt to adapt your auto-play AI to live dynamic difficulty balancing! It is tempting to try to adapt this AI to manage dynamic difficulty adjustment to reduce user churn. However, the developers I have worked with who have tried this have failed to produce desirable results. This is because the AI works against the human-designed difficulty/progression curves, upsetting the careful balance of friction vs. progression that leads to optimized retention and monetization in a free-to-play puzzle game. It is theoretically possible to develop an AI that would take in a variety of behavioral triggers to “tune” the perfect balance of friction vs. results on a player-by-player basis. However, this is a much more complicated endeavor than it appears on the surface, with a high risk of failure and a much higher cost than a good level design team and a simpler heuristic churn-prevention algorithm.

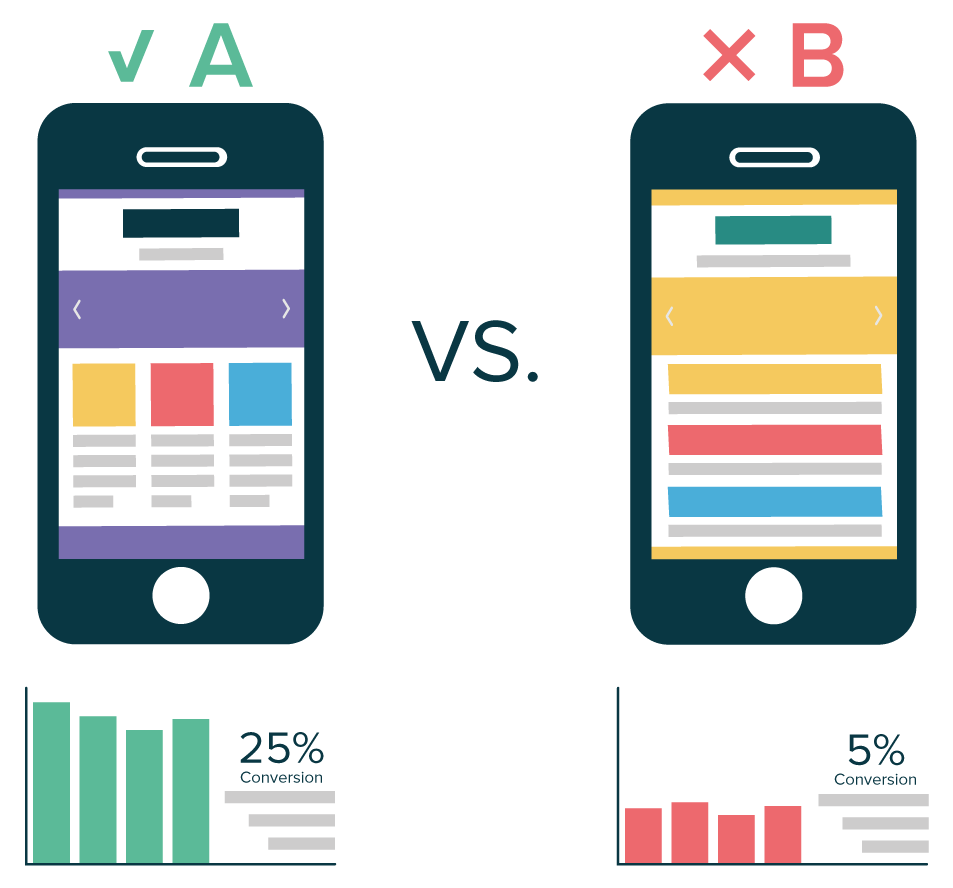

Phase 3B: A/B Testing And First Rebalancing

Testing your level balancing in A/B tests with a low percentage of players is key to deeply understanding the nature and impact of balancing changes across your full progression. Even only a few hundred players compared against the whole player base will allow you to confidently compare level design KPIs (Key Performance Indicators) like win rate, moves left when level won, or spend per level (e.g. extra moves bought, booster usage), in order to determine whether your changes are likely to positively impact player behavior.

This will let you rebalance and optimize in much shorter timeframes, and be confident in the expected results of a change before pushing it live for 100% of your player base.

A/B testing can be enabled fairly easily using off-the-shelf analytics packages like DeltaDNA or Game Analytics. However, be aware of the GIGO (garbage in, garbage out) rule. While A/B tests are technically reasonably easy to set up, you want to be very careful in the design of your tests and the instrumentation of your data to ensure you are accurately measuring what you think you are measuring, and are doing so in a way that will generate statistically significant results. Qualified input from a Data Analyst/Data Scientist with experience in A/B testing is highly recommended to ensure good results.
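As one illustration of what “statistically significant” can mean for a win-rate comparison, the sketch below runs a two-proportion z-test in plain Python. The function name and the sample numbers are made up for illustration. A real test design (sample size, stopping rule, multiple KPIs) is exactly where an analyst’s input is needed, and your analytics package may use a different method.

```python
import math


def two_proportion_z_test(wins_a, n_a, wins_b, n_b):
    """Compare two win rates; return (z, two-sided p-value).

    Group A is the control, group B the rebalanced variant.
    Uses the pooled-proportion normal approximation, which assumes
    reasonably large samples in both groups.
    """
    p_a = wins_a / n_a
    p_b = wins_b / n_b
    pooled = (wins_a + wins_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every attempt won or every attempt lost in both groups: no evidence of a difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical numbers: a large control group vs. a few hundred test players.
    z, p = two_proportion_z_test(wins_a=18_000, n_a=40_000, wins_b=205, n_b=400)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference in win rate is unlikely to be noise, but it says nothing about whether the test was instrumented correctly, which is the GIGO concern above.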

Phase 4: Live Phase and Rebalancing Loop

After a level or a level pack goes live there should be a constant rebalancing loop happening regularly. This allows for fine-tuning of level difficulty and performance based on your difficulty curve and KPI goals, and keeping the system in tune as new content is added. Meaningful gains in retention and monetization are possible only through changes in level difficulty progression, rewards, more exciting level variations, and the pace and timing of new feature utilization – so don’t underestimate the impact of ongoing attention to your level designs, even after they go live.

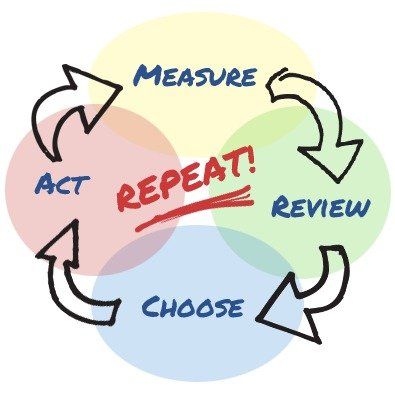

Fast, ongoing, well-informed iterations are key to long-term success

Also, managing a live game, in most cases, requires constantly extending the game to include new content. Adding this new content may change the game’s overall progression needs, including changing the optimal timing for difficulty pinch-points relative to end-of-content. In most cases, this will necessitate constant rebalancing to reduce the difficulty of the early content (including the First Time User Experience) to push players deeper into the game so they can see its best and most interesting parts.
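One simple way to drive such a rebalancing loop is to compare each live level’s observed win rate with the target from your difficulty curve and flag the outliers. The sketch below assumes hypothetical field names, a tolerance of 5 percentage points and a minimum sample of 500 attempts. None of these values come from a specific game, so tune them to your own KPIs.

```python
from dataclasses import dataclass


@dataclass
class LevelStats:
    level: int
    target_win_rate: float    # from the designed difficulty curve
    observed_win_rate: float  # from live analytics
    attempts: int


def flag_for_rebalance(stats, tolerance=0.05, min_attempts=500):
    """Return (level, verdict, delta) for levels that drift from their target."""
    flagged = []
    for s in stats:
        if s.attempts < min_attempts:
            continue  # too little data to act on yet
        delta = s.observed_win_rate - s.target_win_rate
        if abs(delta) > tolerance:
            verdict = "too easy" if delta > 0 else "too hard"
            flagged.append((s.level, verdict, round(delta, 3)))
    return flagged


if __name__ == "__main__":
    live = [
        LevelStats(41, target_win_rate=0.60, observed_win_rate=0.62, attempts=9000),
        LevelStats(42, target_win_rate=0.45, observed_win_rate=0.30, attempts=7500),
        LevelStats(43, target_win_rate=0.25, observed_win_rate=0.40, attempts=6100),
    ]
    for row in flag_for_rebalance(live):
        print(row)
```

Running a check like this on a regular schedule, and again whenever new content shifts the end-of-content point, keeps the rebalancing loop focused on the levels that actually need attention.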

Part 2

In the first part, we talked about “level creation cycles” and outlined a six-phase approach for building them. We discussed concepting, internal testing and feedback loops. We talked about usability testing and balancing, and explored the virtues of automated tools. And we concluded with some thoughts regarding going live and further tweaking. In this part, we’re going to talk about common issues and goals.

Before you begin building many levels, you will likely run into a few issues such as how design is communicated, how content releases will be planned and technical obstacles that may arise. Such issues are often addressed during prototyping or early development, but sometimes they aren’t. Sometimes they linger well into production and can become a roadblock for good level and content creation. Regardless of whether they are solved quickly or not, it’s important that level designers be aware of these issues before real production begins, and plan to accommodate them. Trust us: trying to fix a level design process when it’s already on fire is a horrible task that we wouldn’t wish on anyone. Planning is everything.

The main issues that you will likely encounter are:

- Feedback cycles

- Release pipelines

- Progression pacing

- Development readiness

- KPIs

- Retention goals

Feedback Cycles

Design teams are often split into sub-groups based on their sub-discipline. A common split exists between game and level designers, for example, but sometimes there are further divisions of content, narrative, system or economy designers. Design is usually a shared vision as a result, one that requires extensive communication and collaboration to be realized. All groups must work together to achieve the desired user experience, game feel, progression and difficulty. But it’s very easy for them to fall out of sync.

Disconnects often arise over time, usually because they go unnoticed. Sometimes design teams fall out of sync because they were hired at different times and have grown used to working on their part of the game in certain ways. Sometimes they work on such different parts of the game that they don’t naturally have much contact with each other. Sometimes the issue is to do with location, for example level designers building content in one office and systems designers balancing economies in another. And sometimes the problem is one of perceived pecking order, where one type of designer, or one person, is considered “the boss”, such as a game designer who routinely ignores level designers’ needs (or vice versa).

None of these disconnects are productive, and sometimes they even become actively disruptive. Good teamwork and structure are essential as a result, and that means a good feedback cycle should be mandatory.

“Feedback cycle” is a fancy way of saying that designers of all types should regularly meet and play each other’s work. As levels are deployed, game designers should be testing them. As economic balance is updated, level designers should be trying the new balance against their existing levels to see if it fits. If a game mechanic is tweaked, all designers should be examining its impact. All opinions on quality should be heard and all worries about integration into the main game should be aired, always with professional respect.

Feedback cycles should be very regular, as this serves iteration. Try to ensure that there is always time set aside in the production schedule for feedback between designers, and stick to it. For example, you could create a regular Feedback Friday event on the calendar, where team members play each other’s work and then meet to discuss issues.

Always work to ensure that your feedback cycle is about evaluating work in progress, to encourage team members to submit their materials rather than be protective of finished products. (It’s generally a bad idea to allow a designer to disappear “into the cave” to work on levels or systems for long periods of time with no feedback.) Encourage objective assessments rather than opinions, and constructive feedback rather than criticism. Feedback should always be in service of building others’ work up rather than tearing it down.

Release Pipeline

Designing levels can be like running a busy restaurant: it’s not just about making one perfect dish, but about figuring out how to deploy thirty dishes for a hungry audience while maintaining a high bar. It’s about creating a release process that pushes content of a consistent quantity and quality.

This requirement for consistency is especially important for mobile games. Many mobile games need a lot of level content because they’re generally less difficult to play than games on other platforms. Players often burn through new content faster than you think they will, and so if there are long gaps between one content release and another, there is a strong risk of churn: Players who believe they’ve maxed out their enjoyment of a game tend to stop playing, and it’s hard to reacquire them later.

So as a beginning step, it’s always good to have more than one level designer on a team to be able to serve the need for content, as this reduces risk to deployment (quantity). It’s also good to have a clear target in mind for the amount of content you intend to deploy. On a puzzle game, for example, releasing 20 levels per week is a good target as this provides 5 hours of play to most players.
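The 20-levels-for-5-hours target implies roughly 15 minutes of play per level, retries included. That per-level figure is simply what the two numbers imply (5 hours / 20 levels), so measure your own before planning around it. A small sketch of the arithmetic, with hypothetical function names:

```python
import math


def weekly_play_hours(levels_per_week, minutes_per_level):
    """Hours of play a weekly content drop provides to a typical player."""
    return levels_per_week * minutes_per_level / 60


def levels_needed(target_hours, minutes_per_level):
    """Levels to ship per week to reach a target amount of play time."""
    return math.ceil(target_hours * 60 / minutes_per_level)


if __name__ == "__main__":
    print(weekly_play_hours(20, 15))  # the article's target, assuming 15 min per level
    print(levels_needed(5, 10))       # the same 5 hours if players clear levels faster
```

If your players burn through a level faster than assumed, the same weekly play time needs proportionally more levels, which feeds directly into how many level designers the pipeline requires.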

You need to think about how your level creation cycle will serve that need while maintaining quality. Do you assign a team lead to vet for quality and guard the release schedule, for example, like a head chef in a restaurant? That can work well or badly, because it is very dependent on that one individual and can be a cause of burnout. Another option is to defer responsibility to the team, where each designer plays the other’s work. However, be careful not to overly systematize this part of the process (such as with grading or other objective assessments), as doing so often breeds disconnection.

No release pipeline ever gets it right the first time, by the way, but with some adjustments they tend to achieve a regular cadence that works both for the team and the audience. A key consideration is whether to commit to a cycle based on time or volume. Do you guarantee to release every Thursday with whatever levels are ready? Or do you guarantee release once 20 good levels are ready? Generally you’ll need to pick one philosophy or the other, as this will form the basis of your audience’s expectations and affect how your pipeline works.

Progression Pacing

A further consideration is how progression itself paces, meaning the difficulty of levels, the curve that the game follows and when or how it increases or decreases. For example if the game designers invent a new object that clears rows of a puzzle, that has considerable knock-on effects to the design of all future puzzles. It fundamentally changes how the players approach the game, and therefore its difficulty. With a single change like this, many puzzles that would have previously been very difficult might suddenly become very easy.

In order to map the pace of progression, many teams aim for a “saw curve”, where difficulty rises throughout play in a series of peaks and valleys. A boss level, for example, is often a peak. On the other hand, the introduction of a new mechanic is often a good time for a valley, an easy level or two that allows for a learning phase. Our row-clearing object, for example, should come with a couple of easy levels that let players get the hang of it before ramping up the difficulty once more.

A difficulty saw, created by David Strachan. Used with permission.
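The saw shape described above can be sketched as a list of per-level difficulty targets. In this minimal Python example the 0-to-1 difficulty scale, the cycle length of five levels and the step and drop sizes are all illustrative assumptions, not values taken from the figure.

```python
def saw_curve(num_levels, cycle_length=5, start=0.2, step=0.1, drop=0.3):
    """Return a target difficulty (0..1) for each level.

    Difficulty climbs by `step` inside a cycle, peaks on the cycle's last
    level, then falls by `drop` for the next relief level. Because the
    climb per cycle is larger than the drop, each valley sits a little
    higher than the previous one.
    """
    targets = []
    difficulty = start
    for i in range(num_levels):
        if i > 0:
            if i % cycle_length == 0:
                difficulty -= drop  # valley: relief level after a peak
            else:
                difficulty += step  # build-up towards the next peak
        targets.append(round(min(max(difficulty, 0.0), 1.0), 2))
    return targets


if __name__ == "__main__":
    for level, target in enumerate(saw_curve(15), start=1):
        print(f"level {level:2d}: {'#' * int(target * 20)} {target}")
```

A table like this gives level designers a concrete target to balance each level against, and it is easy to insert an extra valley wherever a new mechanic is introduced.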

It’s also generally good practice to push game designers to deliver clearly defined and detailed documentation that describes content overview and distribution. This kind of material helps level designers to plan ahead, to anticipate impacts and craft saw curves that will help design changes shine. Such documentation should include all available information on game progression, new obstacles and mechanics and at which level each of these is intended to be introduced. It also helps if level designers can get access to prototypes and early builds of mechanics to see how they will likely behave, and give feedback on whether they might run into unforeseen problems.

The clearer the overall game progression and the more that level designers can anticipate its consequences, the better resulting levels will be.

One other thing: Progression pacing can also have significant consequences on release timing. For example level designers might need more time to work with a significant game design change in order to start creating great quality levels, and so sticking to a weekly release schedule might be impractical if quality is to be maintained. It’s also possible that a big change might become a significant marketing event, such as running a new ad campaign for a cool mechanic, or even getting some media coverage. That could slow down the release schedule or increase the demand for great quality levels, both of which impact the level design process.

Development Readiness

Pre-release, many games are not ready. In some cases this means that a few features take a development team longer to deliver than anticipated. In others they’re building the rocket while going to space by trying to create an engine, tool and game all in one simultaneous push. It’s more common than you might think, and a regular source of trouble for level designers. It’s extremely difficult to create a level for a mechanic that doesn’t yet work, for example, or for an obstacle that has yet to be implemented.

Obviously this is not ideal and something that level designers should push against. Development of key objects and systems should always be completed and tested before a new level is created using them. Artists and programmers should always finish a new piece of content to at least a functional standard before level designers include it in their levels. Otherwise level work will end up having to be redone.

The same applies to tools. Just as mechanics and systems may not yet be ready, many games are hampered by the need to build required tools. Crafting a level without a comprehensive editor can be very painful, for example, as can trying to define a set of KPIs without the requisite analytics hooks in place to test if they actually work. A comprehensive editor (or similar tool) that offers a playable version of the game is always best to have, because it allows you to directly test your levels on a device and get a first hands-on impression of what works and what doesn’t. Whether that’s using an in-house tool or a commercial engine like Unity or Unreal, working tools make level designers work five times faster than broken tools, and immeasurably aid the release pipeline.

If readiness remains an issue, however, there are two things that level designers can productively do. The first is to create a plan for content that will probably work when tools, objects and other items are ready, and to make sure that that plan is detailed and surfaced to the game’s producers so they understand likely impacts of further delay.

The second is to work on prototype levels, meaning a few basic levels that explore what working objects and tools in the game actually do. Level designers should avoid moving into full production at this point (if possible) as that will create compound problems down the road, but actively working to build demonstration levels and other support materials is usually good. Such demos and materials really help engineers and other team members to understand level designer needs. If the level design team is very clear about its requirements and can demonstrate prototypes that show them in action, everyone knows what to aim for.

KPIs

It’s also important to anticipate the key performance indicators (KPIs) that you will likely need to track the impact of your levels. This is especially important for considering how the live phase of deployment and rebalancing will work (as we discussed in the previous article). Here are some KPIs to consider, but you’ll also want to think about unique KPIs for your game:

- Win and fail rates

- Number of moves left (if applicable) before a level is lost

- Percentage of level objectives completed (won or lost)

Knowing this kind of information will help you empirically make decisions to tweak your levels, such as modifying layout, cutting or enhancing obstacles, adjusting available moves or spawn rates, reframing level goals and much more.
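As a rough sketch of how these KPIs could be derived from raw attempt data, the Python below aggregates a list of attempts per level. The `Attempt` record and its field names are hypothetical, so map them to whatever your analytics events actually contain. Moves left is averaged over won attempts only, following the “moves left when level won” KPI from the first part.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    level: int
    won: bool
    moves_left: int        # unused moves when the attempt ended
    objectives_done: int
    objectives_total: int


def level_kpis(attempts):
    """Aggregate raw attempts into per-level KPIs."""
    by_level = {}
    for a in attempts:
        by_level.setdefault(a.level, []).append(a)

    report = {}
    for level, rows in sorted(by_level.items()):
        n = len(rows)
        wins = [a for a in rows if a.won]
        win_rate = len(wins) / n
        report[level] = {
            "attempts": n,
            "win_rate": win_rate,
            "fail_rate": 1 - win_rate,
            "avg_moves_left_on_win": (
                sum(a.moves_left for a in wins) / len(wins) if wins else 0.0
            ),
            "avg_objective_completion": (
                sum(a.objectives_done / a.objectives_total for a in rows) / n
            ),
        }
    return report


if __name__ == "__main__":
    log = [
        Attempt(1, True, 4, 3, 3),
        Attempt(1, False, 0, 1, 3),
        Attempt(2, False, 0, 2, 4),
    ]
    for level, kpis in level_kpis(log).items():
        print(level, kpis)
```

A level with a low win rate but high objective completion is losing players near the finish line, which often points at the move count rather than the layout.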

Retention Goals

Besides meeting all technical requirements, it’s good to set some general design goals as a reliable foundation for level production. Design goals can be a kind of yardstick against which to measure level designs, to see whether the game takes shape as expected.

We generally recommend trying to tie your design goals to realizable KPIs where possible, including all important activity, but especially those that concern retention. As mentioned above players often have a hunger for new content, and so it’s important to keep supplying them with new and fun gameplay experiences to explore. They will only come back (retention) as long as a game is interesting to them, and this is especially true for so-called “most valuable” players. It’s the job of the level designer to keep serving those slices of interestingness to retain players.

New level releases should increase retention and keep players motivated, always enhancing the game experience. Pay attention to those awesome players who might have reached the end of content and are waiting for more. Offer new gameplay, obstacle combinations and visually appealing content. But remember that retention strategies are not just about increasing difficulty. In fact excess difficulty can drive players away. If players feel they cannot beat a level because too much is going on, they’ll leave. Similarly if they do not feel skilled enough to beat the level, or the pre-set win strategy is too linear, or too dependent on luck, they’ll leave.

Great level design can feel like walking a tightrope, where too much or too little of any one thing can upset the balance of the game and cause it to fail. Try to always keep a sense of balance. Slowly ramp up the difficulty curve with proper tutorials and regular relief phases. Make sure that you’re always following this classic loop:

1. Learning/relief levels

2. Build-up levels

3. Climax or boss level

4. Go to 1

And most importantly: A level should always feel beatable without using boosters or extra moves. If this is not possible for the player, if they do not feel they can have enough moves to master a level, or otherwise have no faith that they can succeed, they will churn.
